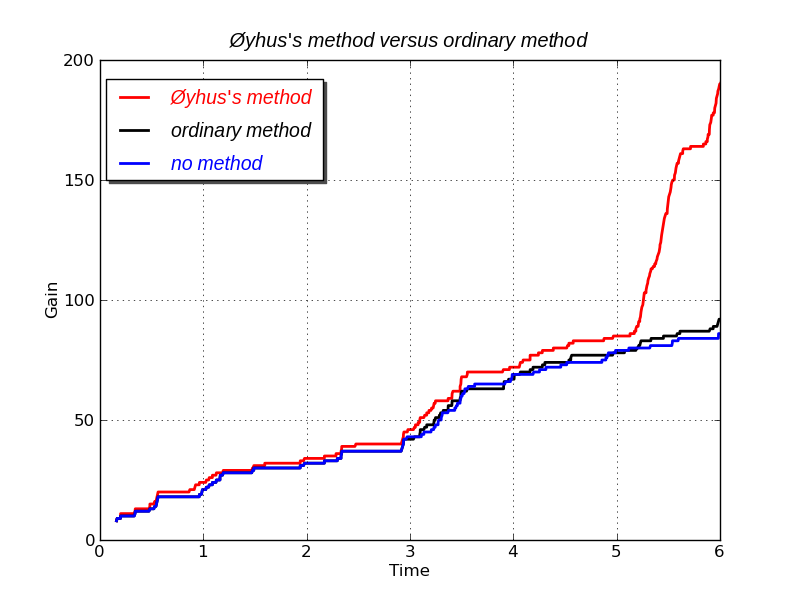

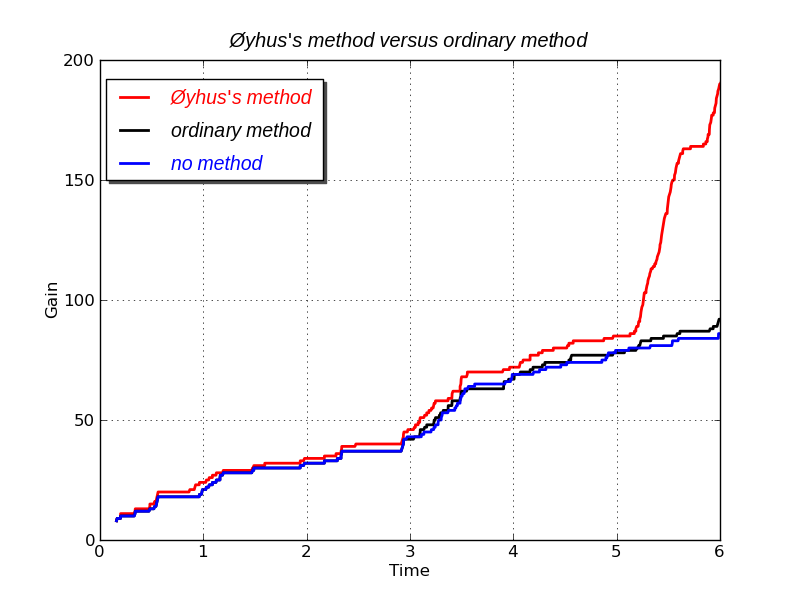

The method actually optimizes many forms of research, but my clients want market research, such as Google AdWords, email campaigns, and similar, so that is where my data comes from. Here is my method compared with an ordinary method, applied to some real marketing data.

In this case there is 100% gain from my method with the same amount of marketing as the normal method. The gains are sums of individual responses to eight different email campaigns. These could otherwise be sales, click-throughs, new subscribers, etc.

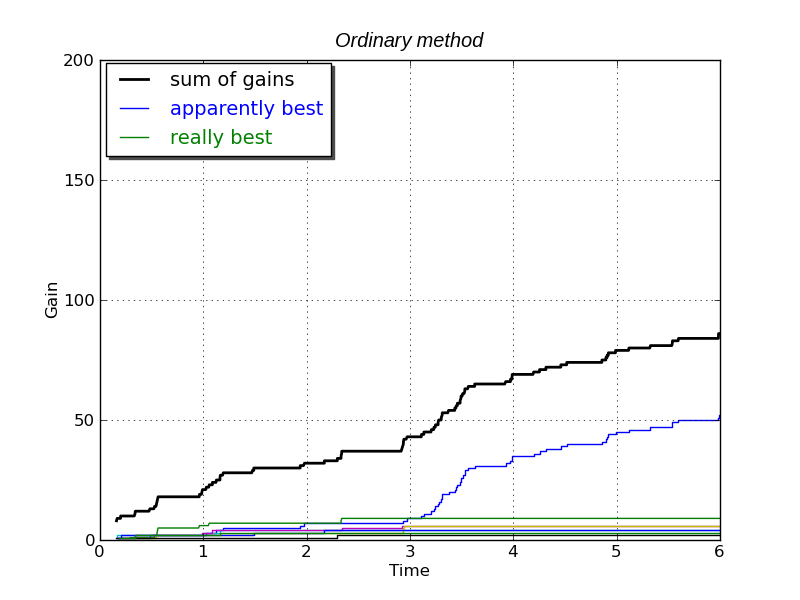

This results in a conflict between the cost of testing and the greed for profit.

While testing, resources are used on campaigns that are not the best, and on some that are actually bad. But if the testing is stopped too early, one will not know for certain whether the best campaign has been found, and will usually end up using a worse one. Missing the best campaign will definitely result in less gain, typically significantly less, because the best campaign tends to be significantly better than the second best.

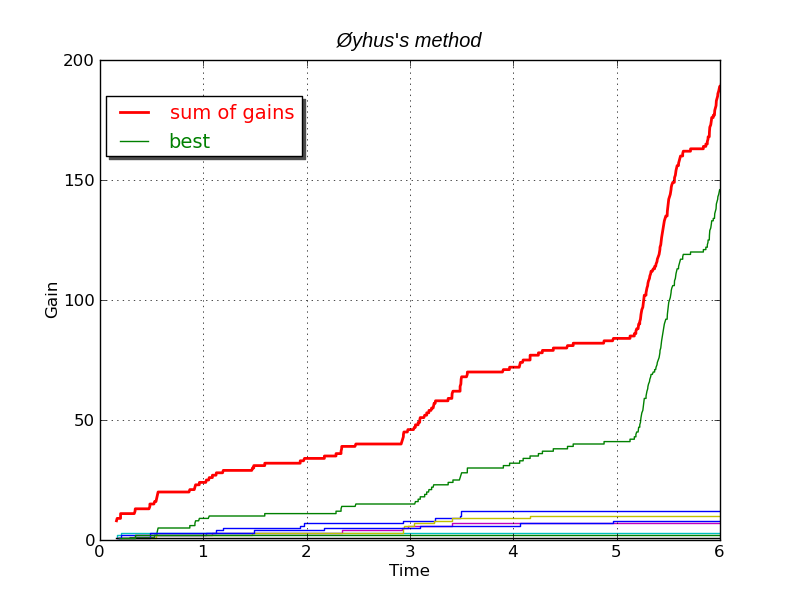

My method solves this problem by optimizing the balance between testing and profit.

However, the blue is not really the best one. The green is the best. This method chooses the wrong campaign because it stopped testing too early. Had the test run 2-4 times longer, the best campaign would surely have been found, but that would have removed a lot of the profit from day 3 to day 6, likely half of it.

Even though it is mistaken, this ordinary method gives gains over using a random campaign, or over using all eight of them. In this case about 100% more gain.

Choosing the wrong campaign is quite typical, and is in fact the most likely outcome. There are simply too few responses to give any certainty. At day 3 there are just 1-8 responses for each campaign. Each response is a person reacting to the marketing campaign, and these reactions can be seen as steps in the curves.
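To illustrate why so few responses give so little certainty, here is a minimal simulation sketch of a "test equally, then pick the leader" approach. The response rates in `RATES`, the function names, and the send counts are all hypothetical assumptions chosen for illustration; they are not the marketing data shown in the figures.

```python
import random

def pick_after_test(rates, sends_per_campaign, rng):
    """Send each campaign equally often, then return the index with most responses."""
    responses = [
        sum(rng.random() < rate for _ in range(sends_per_campaign))
        for rate in rates
    ]
    best_count = max(responses)
    # Break ties at random so no campaign is favoured by its position.
    leaders = [i for i, r in enumerate(responses) if r == best_count]
    return rng.choice(leaders)

def chance_of_correct_pick(rates, sends_per_campaign, trials=300, seed=0):
    """Estimate how often the test phase ends with the truly best campaign in the lead."""
    rng = random.Random(seed)
    best = max(range(len(rates)), key=lambda i: rates[i])
    hits = sum(
        pick_after_test(rates, sends_per_campaign, rng) == best
        for _ in range(trials)
    )
    return hits / trials

# Hypothetical response rates for eight campaigns (assumed, not the article's data).
RATES = [0.010, 0.015, 0.020, 0.025, 0.030, 0.035, 0.040, 0.060]

if __name__ == "__main__":
    # With 100 sends per campaign, each campaign gets only a handful of responses.
    for sends in (100, 400, 1600):
        print(sends, chance_of_correct_pick(RATES, sends))
```

Under these assumed rates, the shortest test leaves each campaign with only a handful of responses, so the leader is often not the truly best campaign. Longer tests pick correctly more often, at the cost of more sends spent on weak campaigns.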

It gets more information and more profit, and gets it sooner. Even with a total of just 45 responses at day 3, it has identified the green campaign as likely the best one.

At day 5 my method really kicks in. Certainty about the best campaign is reached here, and the others are dropped.

There is no distinction between testing and using campaigns here, because my method views testing as a means to gain more profit, and uses this to automatically balance the curiosity of the testing with the greed for earning profits.
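The idea of not separating testing from use can be sketched with Thompson sampling, a well-known technique that balances exploring and earning automatically. This is not claimed to be the method described here, whose details are not given. The function names and the response rates are hypothetical assumptions for illustration.

```python
import random

def thompson_pick(successes, failures, rng):
    """Sample a plausible response rate for each campaign and pick the highest."""
    draws = [
        rng.betavariate(s + 1, f + 1)  # Beta(1, 1) prior, i.e. uniform
        for s, f in zip(successes, failures)
    ]
    return max(range(len(draws)), key=lambda i: draws[i])

def run_thompson(rates, total_sends, seed=0):
    """Simulate sends one at a time; return sends per campaign and total responses."""
    rng = random.Random(seed)
    n = len(rates)
    successes = [0] * n
    failures = [0] * n
    for _ in range(total_sends):
        i = thompson_pick(successes, failures, rng)
        # Simulated response: in real use this would be an observed reaction.
        if rng.random() < rates[i]:
            successes[i] += 1
        else:
            failures[i] += 1
    sends = [s + f for s, f in zip(successes, failures)]
    return sends, sum(successes)

# Hypothetical response rates for eight campaigns (assumed, not the article's data).
EXAMPLE_RATES = [0.010, 0.015, 0.020, 0.025, 0.030, 0.035, 0.040, 0.080]

if __name__ == "__main__":
    sends, total_responses = run_thompson(EXAMPLE_RATES, 20000)
    print(sends, total_responses)
```

Every send both earns responses and updates the estimates, so there is no separate test phase. Campaigns that look weak receive fewer sends as evidence accumulates, but are never ruled out by a fixed deadline.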

If this had been medicine, the gain could have been lives saved. In oil prospecting it could have been wells found. Etc.

It accelerates research when each theory must be tested several times, and one wants to find the best one.

By Kim Øyhus (C) 2011.7.4

I have done some experiments to see what happens when I run competing ads in AdWords. It chooses one ad over the rest, but not the best one, as my system does. AdWords actually misses by a large margin.